
Achieve More With Red Hat OpenShift 4.21

(MENAFN- Mid-East Info) Red Hat, the world's leading provider of open source solutions, announced that Red Hat OpenShift 4.21, based on Kubernetes 1.34 and CRI-O 1.34, is now generally available. Together with Red Hat OpenShift Platform Plus, this release demonstrates the company's continued commitment to delivering the trusted, comprehensive, and consistent application platform that enterprises rely on for production workloads across the hybrid cloud without compromising on security.

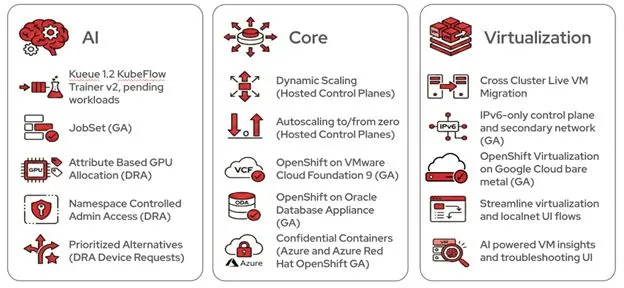

This release emphasizes running AI training jobs, containerized microservices, and virtualized applications on the same infrastructure with the same operational model. With OpenShift 4.21, you can simultaneously modernize existing IT infrastructure and accelerate AI innovation on a single, cost-efficient platform that scales automatically based on real-time business demand.

Imagine a large financial institution that needs to maintain legacy virtual machines (VMs) for core banking while also training new AI models for fraud detection. Previously, these two worlds lived in different systems, creating “silos” and wasted costs. But with OpenShift 4.21, this firm can run both on the same infrastructure. Using the new Dynamic Resource Allocation (DRA) operator, they can even prioritize high-end GPUs for AI training during the day, but automatically shift those resources or scale them to zero at night to save money. Additionally, they can move active VMs between data centers with zero downtime, helping to ensure banking services stay online even during hardware maintenance.

Whether you deploy OpenShift as a self-managed platform, or consume it as a fully managed cloud service, you get a complete set of integrated tools and services for cloud-native, AI, virtual and traditional workloads alike. This blog covers key innovations in OpenShift 4.21 across AI, core platform capabilities, and virtualization. For complete details, see the OpenShift 4.21 release notes.

AI

Artificial intelligence has become a cornerstone of modern industry, driving breakthroughs in everything from personalized healthcare to autonomous systems. However, as AI models grow in complexity, the underlying infrastructure must evolve to handle massive computational demands efficiently. In this release of OpenShift, we continue to add AI features into the platform to support production AI workloads at scale.

Streamline AI workloads with Red Hat build of Kueue v1.2

With OpenShift 4.21, Red Hat build of Kueue v1.2 delivers two capabilities that matter for AI teams at scale:


- Support for Kubeflow Trainer v2 in Red Hat OpenShift AI 3.2: Instead of managing separate resources for each machine learning (ML) framework, data scientists now work with a single TrainJob API. They focus on model code while platform teams define infrastructure through training runtimes.

- Visibility API for pending workloads: Before this, batch jobs sat in queues with no insight into position or wait time. Users couldn't tell whether they were first or hundredth in line. Administrators had no way to spot resource bottlenecks. Now both sides see what's happening. Users get estimated start times. Administrators identify where specific resources, like GPU types, are oversubscribed. The queue isn't a black box anymore.
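To make the pending-workloads view more concrete, here is a minimal Python sketch of how a user or administrator might read such a summary. It is an illustration under stated assumptions, not the Red Hat build's documented interface: the field names (`items`, `localQueueName`, `priority`, `positionInClusterQueue`, `positionInLocalQueue`) follow the pending-workloads summary described for upstream Kueue's `visibility.kueue.x-k8s.io` API group and may differ by version, and the sample data, queue names, and helper functions are hypothetical.

```python
from collections import defaultdict

# Hypothetical response body, shaped like a pending-workloads summary from
# upstream Kueue's visibility API. Field names are an assumption and all
# values are invented for illustration.
SAMPLE_RESPONSE = {
    "items": [
        {"metadata": {"name": "train-fraud-a", "namespace": "ml-team"},
         "localQueueName": "gpu-queue", "priority": 100,
         "positionInClusterQueue": 0, "positionInLocalQueue": 0},
        {"metadata": {"name": "batch-report", "namespace": "finance"},
         "localQueueName": "cpu-queue", "priority": 50,
         "positionInClusterQueue": 1, "positionInLocalQueue": 0},
        {"metadata": {"name": "train-fraud-b", "namespace": "ml-team"},
         "localQueueName": "gpu-queue", "priority": 10,
         "positionInClusterQueue": 2, "positionInLocalQueue": 1},
    ]
}


def queue_position(summary, namespace, name):
    """Return a workload's 0-based position in the cluster queue, or None."""
    for item in summary.get("items", []):
        meta = item.get("metadata", {})
        if meta.get("namespace") == namespace and meta.get("name") == name:
            return item.get("positionInClusterQueue")
    return None


def pending_per_local_queue(summary):
    """Count pending workloads per local queue to hint at oversubscription."""
    counts = defaultdict(int)
    for item in summary.get("items", []):
        counts[item.get("localQueueName", "<unknown>")] += 1
    return dict(counts)


if __name__ == "__main__":
    print(queue_position(SAMPLE_RESPONSE, "ml-team", "train-fraud-b"))
    print(pending_per_local_queue(SAMPLE_RESPONSE))
```

In a real cluster the summary would come from the API server rather than a literal; in upstream Kueue it is typically fetched with `kubectl get --raw` against the visibility API group, but the exact path and version for this release should be taken from the product documentation.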

